Building for Every Language

The second post in the Discourse Without Borders series.

Hello again 👋

If you're a history buff, this is the blog post for you. It covers how multilingual support at Discourse evolved over 13 years, with some honest behind-the-scenes moments.

In the last post, I talked about why we believe every community should be able to welcome people in any language. This one is about what we've done about it, and how long it took us to get serious.

(Timeline graphic. Surviving labels: "no i18n", "20 locales", "plugin created", "74 languages", "replace APIs", "from translation", "cost: pennies".)

Act 1: "Give internationalization (i18n) proper love" (2012–2016)

In June 2012, three people sat down and wrote the founding goals for Discourse. Eleven bullet points covered open source, easy deployment, rethinking forum UX, mobile-first, real-time updates, touch support, data portability. i18n wasn't on the list, and the internet they were building for spoke English.

We didn't prioritize multilingual... but our community did!

Within months of those goals being published, people started asking. One of the earliest replies to the mission goals topic was from a community member who'd clearly been holding his breath:

"Give internationalization proper love and the product will spread across the world!"

He pointed to WordPress's localization workflow as the gold standard. Jeff's response was characteristically casual: "There is a string file, and we try very hard to put all text through it — just edit it for your language!"

Narrator: it was not that simple.

The community pushed back. How would translators know which strings changed between versions? How would quality control work? Meanwhile, people discovered that Welsh has six plural forms (as does Arabic, as I later learned), and the complexity of real i18n started to sink in.

By 2014, roughly 20 community-contributed localizations existed, and we adopted a translation platform. Neil, one of the first engineers at Discourse, saw 19 pending translator requests on the first day. One contributor, after a year of manually comparing git commits for the Dutch translation, was just relieved: "I'm happy to use the new tool." Another joked about submitting English (UK) translations. How the turn tables...

In February 2015, a community member proposed inline post translation (translating content, not just the UI), referencing Facebook's approach and asking for a "See translation" button on every post. Six months later, the discourse-translator plugin was born with support for Microsoft, Google, and Yandex, translating each post once per locale to save money.

Then came the goat farmers. 🐐 🐐 👩🏻‍🌾

In 2016, a community member running a bilingual goat farming forum (Ukrainian and English) posted a detailed proposal for collapsible language sections. His community had discovered that English-speaking goat farmers had great interest in how Ukrainian goat farmers make cheeses, and vice versa for professional breeding. They were running manual bilingual Q&A sessions because Google Translate was "a disaster" for their professional terminology. He wanted collapsible sections, a language switcher, and translatable category names.

Our team was upfront about it: dealing with language headers for anonymous users makes caching an absolute nightmare. "We weren't able to even brainstorm a way of doing it at all."

So that was the state of things. Discourse did UI localization pretty well. The community wanted content translation. And for years, the answer was "here's a plugin" that worked but didn't go very far.

Act 2: The Plumbing (2017–2020)

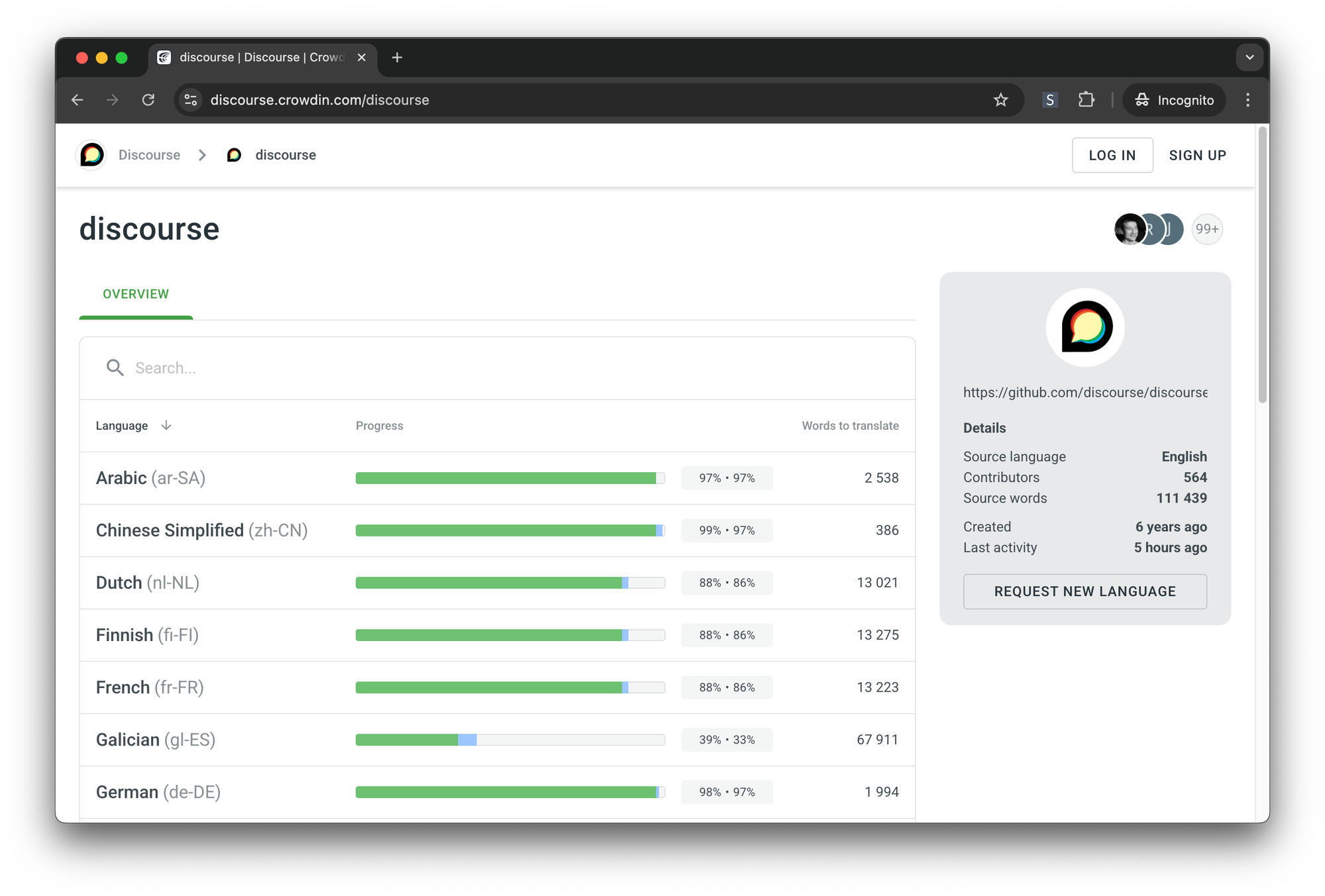

In 2017, we published the first official guide for structuring multilingual communities on meta. The recommended options were language-specific categories, language tags, or running separate instances, which showed how manual the approach still was. Then came the Crowdin migration.

By early 2020, our localization infrastructure was held together with duct tape and workarounds. We were using a forked copy of i18n.js so old nobody remembered who'd forked it. MessageFormat.js was v0.1.5, seven years out of date. Our internal tooling was workarounds stacked on workarounds for translation platform bugs.

We'd originally chosen our platform because we thought it was free for open source. It... turned out not to be.

Crowdin offered us a deal and we took it. We migrated 74 languages, built custom automation with Jenkins, and finally had infrastructure that didn't fight us. The community reaction on meta was mostly excited, though one contributor raised a very real concern about quality control, recounting how a Spanish "translation vigilante" had once gone rogue "translating any sentence left and right without consulting the other translators." Fair.

What mattered then in 2020 was that a very low percentage of customers used a non-English locale. It was hard to justify doing more.

Act 3: Reddit Called (Late 2024)

For a few years, multilingual was in a holding pattern. The infrastructure was better, but it was still hard to justify the work. Sam saw a mixed Japanese/English gaming topic and thought "this is SUCH a missed opportunity", but it stayed in the "someday" bucket.

Then in October 2023, Sam made a prediction: "all translation APIs will die and be replaced with LLMs." His vision was a "magical babel fish mode where you select your language and we just auto translate everything." Looking back at this point, I’m reminded once again that Sam is (almost 😅) always right.

In October 2024, Gerhard searched for "discourse hosting" from Austria. When Google switched to German results, Discourse disappeared completely. Sam's reaction: "There are 66 million people in France; Australia has roughly a third of that population. In countries like Japan and Korea, you don't even exist unless you have a localized site."

A month later, Reddit's Q3 2024 earnings came out and machine translation had driven 4x incremental daily active users with up to 40% of daily traffic coming from Google. Falco shared that his wife had never used Reddit because of the language barrier, but she'd already installed the app because everything was showing in Portuguese for her now. That one hit home.

And the cost math was wild.

| | Google Cloud Translation | Gemini 2.5 Flash-Lite |
|---|---|---|
| Price | $20 per million characters | ~$0.10 input + ~$0.40 output per million tokens |
| 1,500 posts into 4 languages | ~$180 | ~$1.26 |
| Per post, per language | ~3 cents | ~0.02 cents |
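
For anyone who wants to check that arithmetic, here is a rough sketch in Python. The prices and the workload come from the table. The average post length (1,500 characters) and the token counts (about 420 in, 420 out per translation) are my assumptions, back-derived so the totals land on the table's figures; real posts, prompts, and tokenizers will vary.

```python
# Prices quoted in the table (USD).
GOOGLE_USD_PER_M_CHARS = 20.00
LLM_USD_PER_M_INPUT_TOKENS = 0.10
LLM_USD_PER_M_OUTPUT_TOKENS = 0.40

# Workload from the table.
POSTS = 1_500
LANGUAGES = 4

# ASSUMPTIONS, not from the post: chosen so the totals match the table.
ASSUMED_CHARS_PER_POST = 1_500
ASSUMED_INPUT_TOKENS = 420   # per translation, ignoring any prompt overhead
ASSUMED_OUTPUT_TOKENS = 420  # per translation


def per_character_cost(translations: int, chars_per_post: int) -> float:
    """Total USD when billed per million source characters."""
    return translations * chars_per_post / 1_000_000 * GOOGLE_USD_PER_M_CHARS


def per_token_cost(translations: int, input_tokens: int, output_tokens: int) -> float:
    """Total USD when billed separately per million input and output tokens."""
    usd_per_translation = (
        input_tokens * LLM_USD_PER_M_INPUT_TOKENS
        + output_tokens * LLM_USD_PER_M_OUTPUT_TOKENS
    ) / 1_000_000
    return translations * usd_per_translation


# One translation per post per target language.
translations = POSTS * LANGUAGES
google_total = per_character_cost(translations, ASSUMED_CHARS_PER_POST)
llm_total = per_token_cost(translations, ASSUMED_INPUT_TOKENS, ASSUMED_OUTPUT_TOKENS)

print(f"Per-character API: ${google_total:.2f} total, "
      f"{google_total / translations * 100:.2f} cents per translation")
print(f"Per-token LLM:     ${llm_total:.2f} total, "
      f"{llm_total / translations * 100:.3f} cents per translation")
```

Note that the per-post figures only work out if "per post" means one post translated into one language: 1,500 posts into 4 languages is 6,000 translations.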

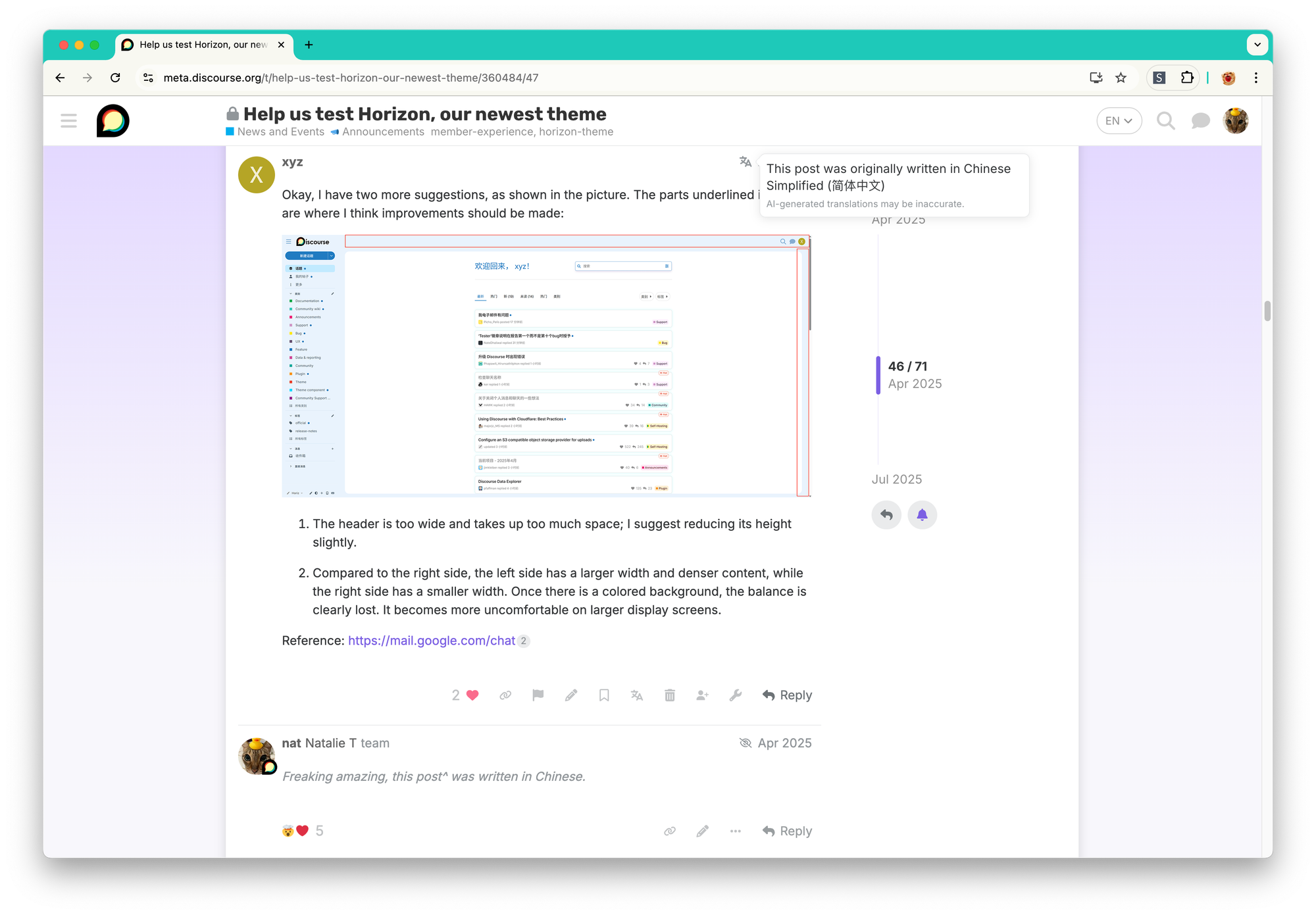

The engineer who'd originally *built* the translator plugin years ago, TGX (tgxworld), tested AI translation to Chinese and declared it 🤌.

Act 4: From POC to Production (2025–2026)

In January 2025, we studied Reddit's translation implementation and built a working proof of concept with a ?lang=ja parameter. Work 'started' on February 10th and by February 14th we were live on meta, with automatic translations to English enabled and backfill running at 1,000 posts per hour.

Then came the three homes problem. The translation feature moved through *three* codebases in four months: the translator plugin, Discourse core, and discourse-ai. On June 4th, I announced what was simultaneously a triumph and a comedy: "All translations are now in discourse-ai; discourse-translator is now 100% irrelevant" 😭😂

There were some fun debates along the way. Should we use flags for languages? One colleague asked "Which country is Arabic? Spanish?" and that ended it: we went with language codes. A Canadian team member pointed out that in Canada, showing a US flag for English and a France flag for French would be "very, very unusual."

Should we brand it as AI translation? I shared that some customers have explicit anti-AI policies and it'd be friction (things have changed since then). Sam preferred to put AI front and centre. Another colleague offered the diplomatic "All translation services are beautiful," someone posted the Chill Guy meme, and we went with AI front and centre.

Translation is genuinely hard and there are some great moments from our community to prove it. A translation agency we hired once translated "Summary" as "Спойлер" (Spoiler) in Russian, and turned email placeholders like from@example.com into Cyrillic: от@gsp-пример.ком. Our Portuguese translators spent an entire meta topic debating how to translate the word "post" because Portuguese doesn't really have one. "Publicação" (publication) is too formal, "poste" (transliteration) is controversial, and "mensagem" (message) conflicts with personal messages. It got serious enough that a linguist appointed by the Portuguese government weighed in. And when those same translators had to deal with "dropdown menu," the official translation was "lista pendente" (pending list). One translator said "it sounds so wrong" and the other replied "I know... I would actually choose 'dropdown' without translating at any time."

The funniest one to date, for me personally, was when Moin, a German user on meta, pointed out that our “composer” had been translated as “the person who writes music”. 🤣

Multilingual search is still on the roadmap and we think multilingual embeddings are the right answer since our existing embeddings on meta are already multilingual.

By October 2025, Falco posted what might be my favourite summary of the whole journey: "We now occupy double the space on Google searches, much like Reddit does. This is available to all customers, and cost pennies."

Then in December 2025, Google started forcing localized search results based on geography. If you search in English from Brazil, Google prioritizes Portuguese results on the first page. Translation went from "nice to have" to essential for search visibility.

The feature documentation went live on meta in July 2025 covering manual and automatic translations, category and topic localization, language switcher, crawler support, and hreflang tags, all available on every plan. 🎉
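
If hreflang tags are new to you, they are `<link>` elements that tell crawlers which URL serves which language. Here is a minimal sketch in Python of what generating them could look like. It assumes each locale is reachable through a ?lang= query parameter, like the proof of concept mentioned earlier; the function name and example URL are hypothetical, and this is not how Discourse actually implements it.

```python
from html import escape
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def hreflang_links(canonical_url: str, locales: list[str]) -> list[str]:
    """Build one alternate link per locale, plus an x-default fallback.

    Hypothetical helper: assumes a ?lang=<locale> URL scheme.
    """
    parts = urlsplit(canonical_url)
    # Keep existing query parameters, but drop any stale lang value.
    base_query = [(k, v) for k, v in parse_qsl(parts.query) if k != "lang"]

    def build(query_pairs: list[tuple[str, str]]) -> str:
        query = urlencode(query_pairs)
        return urlunsplit((parts.scheme, parts.netloc, parts.path, query, ""))

    tags = []
    for locale in locales:
        href = build(base_query + [("lang", locale)])
        tags.append(
            f'<link rel="alternate" hreflang="{escape(locale)}" href="{escape(href)}">'
        )
    # x-default points crawlers at the URL to use when no locale matches.
    tags.append(
        f'<link rel="alternate" hreflang="x-default" href="{escape(build(base_query))}">'
    )
    return tags


for tag in hreflang_links("https://forum.example.com/t/welcome/1", ["en", "ja", "pt"]):
    print(tag)
```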

And then the cherry on top for me was when a Chinese-speaking user started making suggestions on meta entirely in Chinese: not asking about translation, just using meta naturally in their language because the feature made it possible.

Our multilingual team and community

A third of our team live in places where English isn't even the main language, and half of our top ten customer countries don't use English as a first language either. When we built the language switcher, the translation prompts, the hreflang tags, there was no guessing needed as we were building it for ourselves and our customers.

And then there's our community, who kept translations alive for years before any of the AI stuff happened. There are so many people, but to name just two I've interacted with: Tomas maintaining Czech translations for years (and topping our Crowdin leaderboard for a while), and Moin catching more translation bugs than most of us have filed in total. That kind of thing isn't in any spec and we only know it if we live it.

And Gerhard. 🤗 Gerhard is one of our relentless engineers who has single-handedly managed Discourse's entire translation infrastructure. If you're using Discourse in any language other than English, he is a big reason why.

Penar put it best: "Yesterday, you had to speak English to participate on meta. Today, with automatic translation it's available to you in French, Portuguese, Spanish. It is brilliant!"

Next up in Discourse Without Borders: That's how we built it. But what does it actually feel like to use Discourse in another language?
